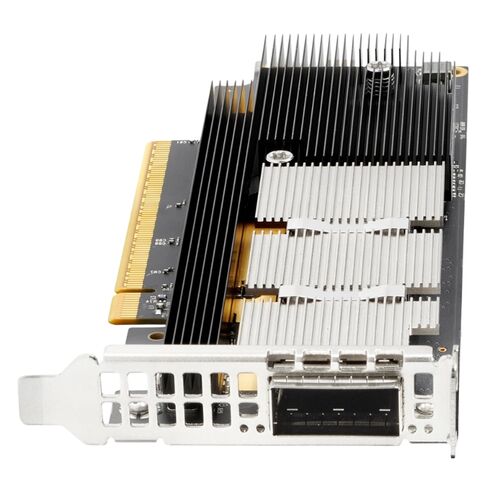

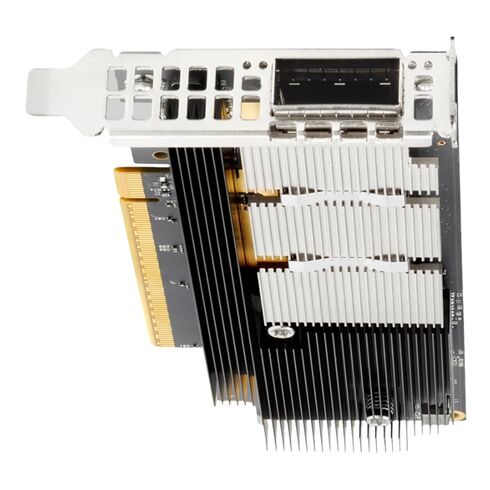

S1J00A HPE Cray SC 200GB PCI-E Single-Port FIO Adapter

HPE S1J00A Cray SC Network Adapter

The HPE S1J00A Cray SC Infiniband NDR 200GB PCI-E Single-Port FIO Optical Fiber Network Adapter is engineered for high-performance computing environments. Designed to deliver exceptional bandwidth and low latency, this adapter ensures seamless data transfer across demanding workloads.

Product Information

- Brand Name: HPE

- Part Number: S1J00A

- Product Type: Single-Port Network Adapter

Extended Information

- Plug-in card design

- Compact structure suitable for enterprise servers

- Engineered for durability and consistent performance

- PCI Express 5.0 x16 interface

- Delivers ultra-fast data throughput

- Supports modern server architectures

Media Type Supported

- Optical Fiber connectivity

- Enhanced signal integrity and reliability

- Ideal for long-distance, high-bandwidth communication

Expansion Slot Type

- OSFP (Octal Small Form-factor Pluggable) port cage

- Accepts OSFP optical transceiver modules

- Designed for scalability and flexibility

Total Number of Expansion Slots

- One dedicated slot available

- Ensures streamlined configuration

- Reduces complexity in system design

Expansion Slot Details

- 1 x Expansion Slot

- Provides secure and stable module integration

- Supports high-performance optical transceivers

HPE S1J00A Infiniband Adapter Overview

The HPE S1J00A Cray SC Infiniband NDR 200GB PCI-E Single-Port FIO Optical Fiber Network Adapter belongs to a specialized category of high-performance interconnect hardware designed for next-generation data centers, scientific computing clusters, and hyperscale enterprise deployments. Adapters in this category are optical fiber network adapters engineered to deliver low, predictable latency, high bandwidth efficiency, and scalable node-to-node communication across distributed computing systems. Organizations deploying high-performance computing platforms increasingly rely on such adapters to remove communication bottlenecks between processors, storage layers, and accelerators.

In workloads with massive parallelism, conventional TCP/IP networking over Ethernet, where the host CPU handles much of the protocol processing, can become a bottleneck because of its latency and per-packet overhead. Cray SC Infiniband NDR adapters address this through transport offload, Remote Direct Memory Access (RDMA), and hardware-level protocol handling that shorten application response time. Installed in a PCI Express slot, the adapter integrates directly with the compute node and requires minimal CPU intervention during data transfers.

Architectural Role

The emergence of NDR-generation Infiniband marks a major advance in cluster interconnect design. This category focuses on NDR200 adapters, which sustain 200 gigabits per second over optical fiber, enabling large data exchanges across compute fabrics while helping to limit congestion and communication imbalance. High-density compute clusters depend on tightly synchronized workloads, so the networking layer is critical for predictable performance across distributed applications. Infiniband adapters in this category use Remote Direct Memory Access (RDMA), which lets one server read from or write to another server's registered memory without involving the remote CPU or operating system kernel in the data path. This greatly reduces processing overhead and keeps communication latency low. As clusters scale to thousands or tens of thousands of nodes, the network adapter becomes a key component for keeping performance uniform across the entire infrastructure.

PCI-Express Integration

The PCI-E interface provides high-speed communication between the adapter and the host system. A PCI Express 5.0 x16 link offers enough bandwidth headroom to run the 200 Gb/s Infiniband port at full rate without an internal bottleneck. DMA engines built into the network controller move data efficiently between GPUs, CPUs, memory subsystems, and storage without routing every byte through the CPU. This hardware-level acceleration improves application scalability, particularly for distributed simulations, climate modeling, computational fluid dynamics, genomics analysis, and deep learning training. The adapter category emphasizes zero-copy data transfers and intelligent queue management to keep packets flowing under sustained heavy load.
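The headroom claim can be checked with back-of-the-envelope arithmetic. This sketch assumes the PCIe 5.0 signaling rate of 32 GT/s per lane with 128b/130b line encoding and ignores packet-level protocol overhead (TLP headers, flow-control packets), so real sustained throughput will be somewhat lower than the figure it prints.

```python
# Rough check: can a PCIe 5.0 x16 slot keep up with a 200 Gb/s port?
# Assumptions: 32 GT/s per lane, 128b/130b encoding, no protocol overhead.

PCIE5_GT_PER_LANE = 32.0          # PCIe 5.0 raw rate per lane (GT/s)
ENCODING_EFFICIENCY = 128 / 130   # 128b/130b line encoding
LANES = 16                        # x16 slot, as listed in the spec
PORT_GBPS = 200.0                 # NDR200 port rate (Gb/s)


def pcie_usable_gbps(gt_per_lane: float, lanes: int, efficiency: float) -> float:
    """Per-direction bandwidth in Gb/s after line encoding (1 transfer = 1 bit per lane)."""
    return gt_per_lane * lanes * efficiency


def headroom(host_gbps: float, port_gbps: float) -> float:
    """Ratio of host-side bandwidth to network port bandwidth."""
    return host_gbps / port_gbps


host = pcie_usable_gbps(PCIE5_GT_PER_LANE, LANES, ENCODING_EFFICIENCY)
print(f"PCIe 5.0 x16 per direction: {host:.1f} Gb/s ({host / 8:.1f} GB/s)")
# -> PCIe 5.0 x16 per direction: 504.1 Gb/s (63.0 GB/s)
print(f"200 Gb/s port needs: {PORT_GBPS / 8:.1f} GB/s")
# -> 200 Gb/s port needs: 25.0 GB/s
print(f"Headroom: {headroom(host, PORT_GBPS):.2f}x")
# -> Headroom: 2.52x
```

Because PCIe is full duplex, the roughly 2.5x margin applies in each direction independently.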

Single-Port Optical Design Advantages

The single-port optical fiber configuration delivers focused performance while minimizing physical complexity inside dense server chassis. Optical links preserve signal integrity over longer distances than copper, allowing large clusters to span racks and rows without loss of throughput or latency consistency. Optical fiber is also immune to electromagnetic interference, which matters in densely populated data centers running at extreme computational loads. In addition, thin fiber cabling obstructs airflow less than bulky copper bundles, which can ease cooling in dense racks.
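Longer runs do add latency, but predictably: propagation delay grows linearly with fiber length. A rough sketch, assuming a typical group refractive index of about 1.47 for silica fiber; the cable lengths are hypothetical examples, not deployment recommendations.

```python
# One-way propagation delay over optical fiber.
# Assumption: group refractive index ~1.47 (typical silica fiber).
SPEED_OF_LIGHT_M_PER_S = 299_792_458
FIBER_INDEX = 1.47


def fiber_delay_ns(length_m: float, index: float = FIBER_INDEX) -> float:
    """One-way propagation delay in nanoseconds for a fiber of the given length."""
    return length_m * index / SPEED_OF_LIGHT_M_PER_S * 1e9


# Hypothetical cable runs: in-rack, across a row, across the room.
for length in (3, 30, 100):
    print(f"{length:>4} m -> {fiber_delay_ns(length):6.1f} ns")
```

The result is roughly 4.9 ns per meter, so a 100 m run adds about half a microsecond each way. Because the delay is fixed by cable length, it does not vary from one message to the next.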

Scalable Infrastructure

This category of network adapters enables efficient communication between GPU nodes during parallel model training. Training large neural networks requires frequent exchange of gradient updates between compute nodes, and latency inconsistencies can significantly reduce overall training efficiency. NDR200 (200 Gb/s) connectivity shortens gradient synchronization while keeping throughput stable across interconnected servers. Infiniband's credit-based link-level flow control keeps the fabric lossless by never sending more data than the receiver can buffer, and hardware congestion control throttles traffic sources when hotspots form, avoiding the packet loss and retransmission delays that degrade large distributed training jobs. As organizations move toward data-driven analytics and AI-powered decision systems, reliable low-latency networking becomes increasingly important.
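To give a sense of scale for gradient synchronization, the sketch below estimates only the bandwidth term of a ring all-reduce, in which each node sends and receives 2(N-1)/N times the gradient size. The ring algorithm, the 1 GB gradient size, the node counts, and full line-rate utilization are all illustrative assumptions rather than measurements of this adapter; per-message latency and compute/communication overlap are ignored.

```python
# Bandwidth-only estimate of ring all-reduce time per training step.
# Assumptions: ring algorithm, links at full 200 Gb/s line rate,
# latency and overlap ignored. Gradient size and node counts are hypothetical.


def ring_allreduce_seconds(grad_bytes: float, nodes: int, link_gbps: float) -> float:
    """Each node sends (and receives) 2*(N-1)/N of the gradient in a ring all-reduce."""
    if nodes < 2:
        return 0.0  # nothing to synchronize
    bytes_sent = 2 * (nodes - 1) / nodes * grad_bytes
    return bytes_sent * 8 / (link_gbps * 1e9)


GRAD_BYTES = 1e9  # hypothetical 1 GB of gradients per step
for n in (2, 8, 64, 1024):
    t = ring_allreduce_seconds(GRAD_BYTES, n, link_gbps=200)
    print(f"{n:>5} nodes: {t * 1e3:.1f} ms")
```

Under these assumptions the estimate is 70 ms at 8 nodes and approaches 80 ms (2 x 8 Gb / 200 Gb/s) as N grows. That near-constant per-node cost is why bandwidth-optimal collectives scale well, and why per-port bandwidth sets the floor on synchronization time.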

High-Bandwidth GPU Communication

GPU-accelerated environments need very fast communication channels for collective operations such as all-reduce, broadcast, and gather. Network adapters in this category accelerate these patterns by offloading communication work to the adapter hardware, minimizing processor involvement. With the adapter handling communication, CPU and GPU resources stay focused on the workload instead of on managing network operations. Organizations building large-scale AI infrastructure rely on these capabilities to keep their hardware highly utilized.
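The all-reduce pattern named above can be illustrated with a toy, single-process simulation of the ring algorithm: a reduce-scatter phase followed by an all-gather phase, each taking N-1 steps in which every node passes one chunk to its neighbor. This models the data movement only. It is not an adapter or library API, and production collective libraries choose among several algorithms and chunking schemes.

```python
from typing import List


def ring_allreduce(data: List[List[float]]) -> List[List[float]]:
    """Sum vectors across simulated nodes using the ring pattern.

    data[r] is node r's local vector. Returns every node's final buffer,
    which equals the element-wise sum over all nodes.
    """
    n = len(data)
    length = len(data[0])
    # Toy restriction: the vector must split evenly into n chunks.
    assert length % n == 0, "vector length must be divisible by node count"
    size = length // n
    buf = [list(v) for v in data]  # per-node working copies

    def chunk(i: int) -> slice:
        return slice(i * size, (i + 1) * size)

    # Phase 1, reduce-scatter: in step s, node r sends chunk (r - s) % n to
    # node r + 1, which adds it into its own copy of that chunk. After n - 1
    # steps, node r holds the complete sum for chunk (r + 1) % n.
    for s in range(n - 1):
        sends = [(r, (r - s) % n) for r in range(n)]
        payloads = [buf[r][chunk(c)] for r, c in sends]  # snapshot before writes
        for (r, c), payload in zip(sends, payloads):
            dst = (r + 1) % n
            buf[dst][chunk(c)] = [a + b for a, b in zip(buf[dst][chunk(c)], payload)]

    # Phase 2, all-gather: in step s, node r forwards the finished chunk
    # (r + 1 - s) % n to node r + 1, which overwrites its copy.
    for s in range(n - 1):
        sends = [(r, (r + 1 - s) % n) for r in range(n)]
        payloads = [buf[r][chunk(c)] for r, c in sends]
        for (r, c), payload in zip(sends, payloads):
            buf[(r + 1) % n][chunk(c)] = payload

    return buf


nodes = [[1.0, 2.0, 3.0], [10.0, 20.0, 30.0], [100.0, 200.0, 300.0]]
print(ring_allreduce(nodes))
# -> [[111.0, 222.0, 333.0], [111.0, 222.0, 333.0], [111.0, 222.0, 333.0]]
```

Each node sends only one chunk per step, so no single link carries the whole vector at once. That property is what lets the pattern use every port's bandwidth in parallel.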

Enterprise Data Center

Modern enterprise data centers are moving toward fabric-based infrastructure, where compute, storage, and networking resources operate as unified pools. This adapter category plays a central role in fabric architectures that allocate resources dynamically according to workload requirements. High-speed interconnects let applications reach remote resources with latency low enough that, for many workloads, those resources can be used much like local hardware. In composable infrastructure environments, this allows capacity to scale without disruptive hardware redesign. Enterprises running virtualization, container orchestration, and software-defined infrastructure depend on reliable interconnects that support rapid workload mobility across servers.

Virtualization Compatibility

Enterprise virtualization platforms demand networking solutions capable of supporting high-density virtual machine deployment while maintaining consistent performance isolation. Adapters within this category incorporate virtualization acceleration features that enable efficient network resource sharing across multiple virtual environments. Hardware-assisted packet processing ensures tenant workloads remain isolated while still benefiting from maximum throughput availability. Cloud service providers deploying private or hybrid cloud models rely on these capabilities to maintain predictable service-level performance across diverse customer workloads. Efficient traffic segmentation and queue management contribute to stable network behavior even under fluctuating demand conditions.

Operational Efficiency

Energy efficiency has become a significant consideration within modern data centers. Optical fiber-based Infiniband adapters reduce power consumption per transmitted bit compared to legacy networking technologies. Thermal efficiency further enhances sustainability goals by minimizing cooling demands associated with high-speed networking equipment. Data center operators seeking environmentally responsible infrastructure solutions often adopt advanced interconnect technologies that balance performance requirements with energy optimization strategies.
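Energy per transmitted bit is simply power divided by data rate. A minimal sketch of the metric follows. The wattage and rate pairs are hypothetical placeholders chosen to show the arithmetic. They are not published specifications for this adapter or any other product.

```python
# Energy per bit = power (J/s) / data rate (bit/s), reported in picojoules.
# The example figures below are HYPOTHETICAL, for illustration only.


def picojoules_per_bit(power_watts: float, rate_gbps: float) -> float:
    """Energy per transmitted bit in pJ for a link drawing power_watts at rate_gbps."""
    return power_watts / (rate_gbps * 1e9) * 1e12


examples = {
    "hypothetical 200 Gb/s link at 25 W": (25.0, 200.0),
    "hypothetical 10 Gb/s link at 10 W": (10.0, 10.0),
}
for label, (watts, gbps) in examples.items():
    print(f"{label}: {picojoules_per_bit(watts, gbps):.0f} pJ/bit")
```

A faster link can draw more total power and still cost less energy per bit, which is the sense in which newer interconnects improve efficiency.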

Flexibility Across Industries

Many industries require reliable high-speed communication to process increasingly complex datasets and analytical workloads. Financial institutions use ultra-low-latency networking to accelerate risk modeling and algorithmic analysis. Healthcare research organizations depend on distributed computing clusters for rapid genomic data processing. Energy companies performing seismic imaging rely on sustained bandwidth to analyze massive geological datasets efficiently.