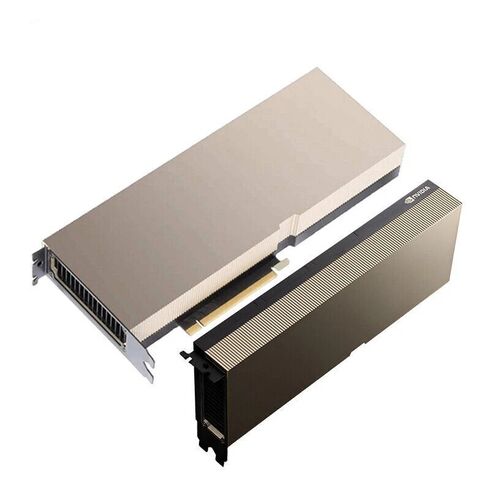

900-21010-0140-030 Nvidia H200 141GB HBM3e PCIe Gen 5 x16 GPU

Highlights of NVIDIA 900-21010-0140-030 141GB HBM3e GPU

The NVIDIA H200 NVL GPU delivers next-generation acceleration for AI, data science, HPC, and large-scale computing environments. Engineered with cutting-edge HBM3e memory and PCIe Gen 5.0 connectivity, this accelerator ensures exceptional throughput and advanced workload efficiency.

General Information

- Manufacturer: Nvidia

- Part Number: 900-21010-0140-030

- Product Category: Graphics Processing Unit (GPU)

- Sub-Type: H200 141GB HBM3e

Technical Specifications

- Graphics Engine: NVIDIA H200 NVL

- PCI Interface: PCI Express Gen 5.0 x16

- Confidential Computing: Supported

- Thermal Design Power (TDP): Configurable up to 600W

- PCIe Gen 5.0 bandwidth: 128GB/s (x16, bidirectional)

- 141GB of next-gen HBM3e Graphics Memory

- Massive 4.8 TB/s memory bandwidth for extreme data throughput

- Up to 4 PFLOPS of FP8 computational performance (vendor peak figure)

- Up to 2× faster LLM inference compared with the previous-generation H100 (vendor claim)

- Up to 110× faster HPC time to results compared with CPU-only systems (vendor claim)

- Memory Technology: HBM3e (High Bandwidth Memory)

Overview of Nvidia 900-21010-0140-030 H200 141GB HBM3e

The Nvidia 900-21010-0140-030 H200 141GB HBM3e NVL Tensor Core PCIe Gen 5 x16 GPU is built for accelerated computing in modern AI, scientific and enterprise data center environments, combining high memory bandwidth, Hopper-generation tensor performance, multi-GPU scalability and high-throughput parallelism. As part of Nvidia’s Hopper architecture lineup, the H200 pairs a larger, faster HBM3e memory system with the Transformer Engine and PCIe Gen 5 x16 host connectivity.

Architecture Enhancements of the Nvidia H200 GPU

The architecture of the Nvidia H200 GPU builds upon the innovative foundations established in the Hopper series, pushing performance boundaries across tensor workloads, parallel compute processes and multi-node scalability. This advanced GPU utilizes an optimized streaming multiprocessor array, expanded cache architecture, improved warp scheduling and a transformer engine engineered specifically for LLM training and inference. Through the use of mixed-precision compute modes, FP8 optimizations, FP16 tensor throughput and enhanced memory partitioning, the H200 GPU ensures that workloads operate at peak efficiency across thousands of concurrent operations.

HBM3e Memory System Advantages

The 141GB HBM3e memory configuration lets data-intensive AI models, transformer pipelines, generative workloads, simulation engines and HPC codes keep large working sets resident on the GPU, reducing the need for memory paging or offloading to host memory. HBM3e increases both memory density and bandwidth over earlier HBM generations, reaching the 4.8 TB/s listed above. For bandwidth-bound workloads, this can mean faster training cycles, shorter inference times and smoother handling of multi-billion-parameter models. The memory system is designed to sustain that bandwidth under persistent heavy workloads.
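As a rough illustration of what 141GB means in practice, the sketch below estimates the footprint of model weights at different precisions and checks it against the card's capacity. The 70-billion-parameter model and the 20% reserve for activations and caches are hypothetical values chosen for the example, and decimal gigabytes are assumed.

```python
# Back-of-the-envelope check: do a model's weights fit in GPU memory?
# Assumptions (not from the listing): decimal GB, and a 20% reserve
# for activations, KV cache and runtime overhead.

GPU_MEMORY_GB = 141  # capacity stated in the listing

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "fp8": 1}


def weight_footprint_gb(num_params: float, dtype: str) -> float:
    """Size of the weights alone, in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits_on_one_gpu(num_params: float, dtype: str,
                    reserve_fraction: float = 0.2) -> bool:
    """True if the weights fit after setting aside a reserve fraction."""
    budget_gb = GPU_MEMORY_GB * (1.0 - reserve_fraction)
    return weight_footprint_gb(num_params, dtype) <= budget_gb


if __name__ == "__main__":
    params = 70e9  # hypothetical 70B-parameter model
    for dtype in ("fp16", "fp8"):
        size = weight_footprint_gb(params, dtype)
        verdict = "fits" if fits_on_one_gpu(params, dtype) else "does not fit"
        print(f"{dtype}: {size:.0f} GB of weights, {verdict} with reserve")
```

The estimate ignores optimizer state, so it applies to inference far more than to training, where memory needs are typically several times larger.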

Transformer Engine

The H200 GPU integrates a refined transformer engine capable of accelerating modern transformer-based AI architectures, including large language models, deep neural networks and retrieval-augmented generation systems. By leveraging FP8 precision modes and hybrid precision techniques, the transformer engine boosts throughput while maintaining accuracy. Memory partitioning optimizes the rapid flow of data across attention heads, sequence layers, embedding matrices and decoder blocks, enabling faster parallel computation.
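To make the FP8 idea concrete, here is a small pure-Python simulation of rounding values to the E4M3 format (4 exponent bits, 3 mantissa bits, largest finite value 448) with a per-tensor scale factor. It is an illustrative sketch only: it is not how NVIDIA's Transformer Engine is implemented, the function names are made up for this example, and real software typically uses more elaborate scaling schemes.

```python
import math

E4M3_MAX = 448.0     # largest finite magnitude in FP8 E4M3
E4M3_MIN_EXP = -6    # exponent of the smallest normal number
MANTISSA_BITS = 3


def round_to_e4m3(x: float) -> float:
    """Round x to the nearest E4M3-representable value, saturating at +/-448."""
    if x == 0.0:
        return 0.0
    sign = -1.0 if x < 0 else 1.0
    mag = min(abs(x), E4M3_MAX)
    _, e = math.frexp(mag)              # mag = m * 2**e with 0.5 <= m < 1
    exp = max(e - 1, E4M3_MIN_EXP)      # clamp into the subnormal range
    quantum = 2.0 ** (exp - MANTISSA_BITS)
    # Python's round() uses round-half-to-even, matching the usual FP rounding.
    return sign * min(round(mag / quantum) * quantum, E4M3_MAX)


def quantize_with_scale(values):
    """Scale so the largest magnitude maps to 448, then round to E4M3."""
    amax = max(abs(v) for v in values)
    scale = E4M3_MAX / amax if amax > 0 else 1.0
    return [round_to_e4m3(v * scale) for v in values], scale


def dequantize(quantized, scale):
    """Undo the per-tensor scale to recover approximate original values."""
    return [q / scale for q in quantized]


if __name__ == "__main__":
    weights = [0.013, -0.27, 0.5, -2.0]
    q, s = quantize_with_scale(weights)
    print("scale:", s)
    print("recovered:", dequantize(q, s))
```

The scale factor is what lets a format with only three mantissa bits cover tensors of very different magnitudes; the rounding error that remains is the accuracy cost that mixed-precision recipes aim to keep small.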

Workload Flexibility: HPC and Scientific Computing

The Nvidia H200 GPU supports an extensive variety of workloads, from deep learning and artificial intelligence applications to traditional HPC simulations and scientific modeling. Its architecture seamlessly handles Monte Carlo simulations, molecular dynamics, computational fluid dynamics, physics modeling, weather prediction, seismic analysis and other scientific domains requiring extremely high compute throughput. For enterprise environments, the GPU excels in data analytics, real-time inference engines, multi-GPU model training pipelines, recommendation engines and vector search optimization. Its ability to adapt to mixed workloads ensures exceptional return on investment for organizations scaling AI and HPC operations simultaneously.

PCIe Gen 5 x16 Connectivity and Bandwidth

The PCIe Gen 5 x16 interface provides high-bandwidth communication with CPUs, NVMe storage arrays and multi-GPU server backplanes. It doubles the per-lane transfer rate of PCIe Gen 4 (32 GT/s versus 16 GT/s), which reduces bottlenecks between system memory and the GPU. Large host-to-device transfers complete sooner as a result, benefiting inference workloads, multi-instance GPU partitioning and distributed training pipelines. This headroom helps the H200 GPU behave predictably under very high I/O load, which is essential for multi-GPU clusters and large AI deployments.
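The 128GB/s figure in the specifications can be reproduced from the link parameters. The sketch below computes per-direction bandwidth from the signaling rate, lane count and 128b/130b line encoding; it ignores packet and protocol overhead, so achievable throughput in a real system will be lower.

```python
def pcie_gb_per_s(gt_per_s: float, lanes: int,
                  encoding: float = 128 / 130) -> float:
    """One-direction bandwidth in GB/s, before protocol overhead.

    gt_per_s: signaling rate per lane (16 for Gen 4, 32 for Gen 5)
    encoding: payload fraction of the line code (128b/130b for Gen 3 and later)
    """
    return gt_per_s * lanes * encoding / 8  # 8 bits per byte


if __name__ == "__main__":
    gen4 = pcie_gb_per_s(16, 16)
    gen5 = pcie_gb_per_s(32, 16)
    print(f"Gen 4 x16: {gen4:.1f} GB/s per direction")
    print(f"Gen 5 x16: {gen5:.1f} GB/s per direction")
    print(f"Gen 5 x16 both directions: {2 * gen5:.1f} GB/s")
    # Dropping the encoding term gives the rounded headline number.
    print(f"Raw, both directions: {2 * pcie_gb_per_s(32, 16, 1.0):.0f} GB/s")
```

The headline 128GB/s is the raw bidirectional rate; after line encoding, each direction carries roughly 63 GB/s.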

Performance Scaling Through Nvidia NVLink Multi-GPU Technology

The Nvidia H200 NVL supports NVLink bridges, a multi-GPU interconnect that lets several H200 GPUs in one server exchange data at much higher bandwidth than PCIe alone and work together as a single high-bandwidth compute pool. NVLink improves communication efficiency between GPUs, which speeds up distributed computing workloads and large-scale deep learning training pipelines. This matters most when training very large language models, where inter-GPU communication often limits sustained throughput across distributed nodes.

Security and Monitoring Enhancements

With data center security becoming increasingly important, the H200 GPU integrates hardware-level security enhancements that support secure execution environments, cryptographic acceleration and memory isolation. These security features protect sensitive AI training datasets, enterprise operational data and inference pipelines from potential vulnerabilities. The GPU also includes advanced monitoring tools that allow administrators to track utilization, temperature patterns, power draw and overall integrity throughout its operational lifecycle.
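One simple way to collect the utilization, temperature and power readings mentioned above is to poll `nvidia-smi` and parse its CSV output. The sketch below assumes `nvidia-smi` is on the PATH of a machine with the NVIDIA driver installed; the helper names are hypothetical.

```python
import subprocess

# Standard nvidia-smi --query-gpu field names.
QUERY_FIELDS = ["utilization.gpu", "temperature.gpu", "power.draw", "memory.used"]


def _to_number(field: str):
    """Convert a CSV field to float; unsupported readings become None."""
    try:
        return float(field)
    except ValueError:
        return None


def parse_gpu_csv(text: str):
    """Parse 'csv,noheader,nounits' output into one dict per GPU."""
    rows = []
    for line in text.strip().splitlines():
        if not line.strip():
            continue
        parts = [p.strip() for p in line.split(",")]
        rows.append(dict(zip(QUERY_FIELDS, (_to_number(p) for p in parts))))
    return rows


def read_gpu_telemetry():
    """Run nvidia-smi once and return the parsed readings."""
    cmd = [
        "nvidia-smi",
        "--query-gpu=" + ",".join(QUERY_FIELDS),
        "--format=csv,noheader,nounits",
    ]
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    return parse_gpu_csv(result.stdout)


if __name__ == "__main__":
    for index, gpu in enumerate(read_gpu_telemetry()):
        print(index, gpu)
```

For continuous monitoring at cluster scale, a dedicated exporter is usually a better fit than repeated command-line polling; this sketch is only meant to show what the raw readings look like.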

Multi-Instance GPU

The Nvidia H200 GPU offers robust virtualization support, enabling partitioning into multiple MIG (Multi-Instance GPU) instances that allow various workloads to run independently on the same GPU. This feature is ideal for cloud data centers, shared inference platforms and environments requiring predictable performance profiles for different users or applications. Each MIG instance operates with dedicated compute cores, memory slices and cache allocations, guaranteeing isolated, consistent performance per instance. This approach maximizes hardware utilization while enabling flexible deployment configurations.

Enterprise Deployment Scenarios

The H200 GPU is engineered for multiple deployment scenarios, including high-density blade server environments, rack-mounted AI servers, enterprise GPU clusters, hyperscale cloud platforms, edge computing environments and advanced research institutions. Many organizations deploy the GPU for AI model serving, data analytics processing, robotic systems, autonomous operational systems and medical imaging acceleration. The GPU’s architecture ensures that it remains a strong performer regardless of whether workloads are compute-heavy, memory-heavy or reliant on high-speed communication.

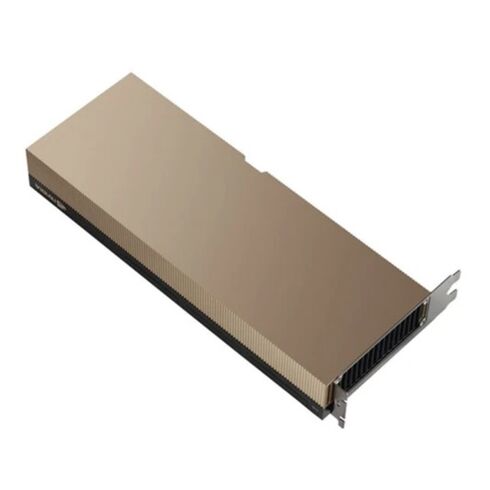

Reliability and Operational Lifespan

The robust design of the Nvidia 900-21010-0140-030 H200 GPU ensures a prolonged operational lifespan under enterprise conditions. The card is designed to handle 24/7 workloads, providing consistent performance for long-term deployments. Power stability, improved component resilience and advanced thermal controls reduce the risk of operational failures. The GPU passes extensive validation processes to ensure it meets data center reliability standards, offering assurances of its stability even when running at maximum compute levels continuously.

Use Cases for Modern Large-Scale AI

The H200 GPU is specifically optimized for large-scale AI applications such as large language model training, generative AI workloads, multimodal learning architectures, computer vision model pipelines, AI agents, retrieval-augmented inference, reinforcement learning and more. These workloads benefit immensely from the GPU’s advanced tensor performance, massive memory bandwidth and ability to efficiently process large datasets across multiple parallel threads. Enterprises relying on AI automation, predictive analytics, conversational agents, real-time inference engines and model-serving workflows see significant performance improvements with H200 GPU adoption.

Cloud Deployment Integration

The Nvidia H200 integrates smoothly with public cloud platforms, private cloud systems and hybrid infrastructures. Its hardware virtualization features align with cloud orchestration frameworks, enabling compute nodes to scale dynamically based on demand. The GPU’s consistent performance profile ensures that cloud workloads run efficiently regardless of tenant complexity or network overhead. Because of its PCIe Gen 5 compatibility, cloud providers are able to deploy high-density configurations that maximize GPU availability while ensuring predictable throughput for enterprise clients.

Data Analytics and Enterprise Software

The GPU enhances enterprise data analytics capabilities by accelerating SQL engines, business intelligence workloads, operational dashboards and real-time data flow processing. With RAPIDS acceleration, data transformation operations become substantially faster. Moreover, its memory bandwidth and tensor engine enable enterprise software systems to handle massive datasets used for forecasting, behavioral analytics, fraud detection and personalization engines.

Research and Scientific Computing

Scientific research institutions depend on high-performance computational engines to model chemical interactions, simulate planetary systems, run DNA sequencing analysis and conduct high-resolution medical imaging. The H200 GPU’s high-bandwidth memory and compute efficiency make it a prime choice for scientific visualization work, volumetric rendering, simulation acceleration and complex mathematical modeling. Its architectural efficiency enables quick iteration cycles for researchers needing to run repetitive analysis or multi-stage simulation work.

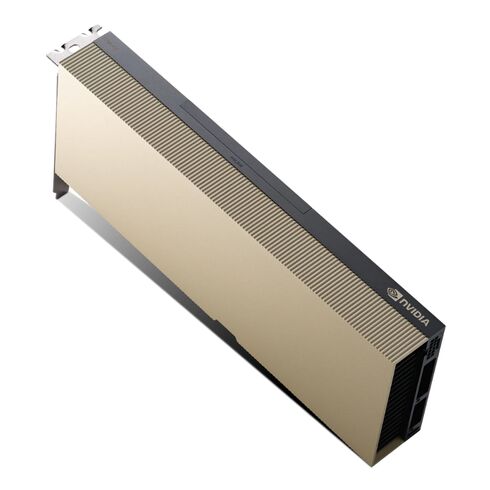

Hardware Integration

The GPU uses sophisticated thermal design structures to maintain cool, stable operation throughout workloads reaching maximum sustained performance. Airflow channels, thermal pads, heat spreaders and optimized power delivery modules allow the GPU to operate efficiently under high strain. Its compatibility with enterprise cooling solutions ensures that server manufacturers can integrate the GPU into both air-cooled and liquid-cooled chassis. Proper hardware integration ensures optimal operating temperatures, extending component lifespan and maximizing workload stability.

Server Overview

The Nvidia H200 GPU works with server platforms that provide PCIe Gen 5 x16 slots and can meet its power and cooling requirements. Compatible servers typically include AI-optimized rack servers, multi-GPU systems, HPC compute nodes, cloud infrastructure servers and specialized GPU platforms. Many enterprise vendors release BIOS and firmware updates to ensure full performance optimization with H200-class GPUs. When deployed in a multi-GPU configuration, systems must provide the necessary cooling capacity and power delivery stability to support continuous, large-scale compute demands.

Scalability in Distributed GPU Environments

The GPU is well suited to distributed computing environments where scalability is essential. NVLink bridges connect the GPUs within a server into closely integrated groups that process large workload partitions cooperatively. This significantly accelerates distributed training, allowing multi-node clusters to handle very large training runs efficiently. Because communication cost grows with the number of GPUs, this interconnect bandwidth helps preserve performance in highly parallelized workloads.
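The role of interconnect bandwidth in distributed training can be illustrated with the standard cost model for a ring all-reduce, in which each GPU sends and receives about 2(N-1)/N times the payload size. The sketch below is a simplification that ignores latency and overlap with compute, and the payload size and link speeds in the example are hypothetical, not H200 specifications.

```python
def ring_allreduce_seconds(num_gpus: int, payload_bytes: float,
                           link_bytes_per_s: float) -> float:
    """Bandwidth-only estimate of one ring all-reduce.

    Each GPU transfers 2 * (N - 1) / N * payload_bytes over its link.
    Latency and compute/communication overlap are ignored.
    """
    if num_gpus < 2:
        return 0.0  # nothing to exchange with a single GPU
    traffic = 2 * (num_gpus - 1) / num_gpus * payload_bytes
    return traffic / link_bytes_per_s


if __name__ == "__main__":
    gradients = 10e9  # hypothetical 10 GB of gradients per step
    for label, bandwidth in (("slower link", 50e9), ("faster link", 400e9)):
        t = ring_allreduce_seconds(4, gradients, bandwidth)
        print(f"{label}: {t * 1000:.1f} ms per all-reduce across 4 GPUs")
```

Because the traffic term approaches twice the payload as the GPU count grows, link bandwidth rather than GPU count ends up dominating the synchronization time in this model.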

Model Training Acceleration

Model training cycles benefit from the GPU’s ability to accelerate memory-intensive processes, reduce training convergence time and handle extremely large batch sizes. With HBM3e memory and enhanced tensor cores, the GPU supports rapid iteration cycles for organizations developing advanced language models, vision transformers, diffusion models and other AI frameworks. Training processes involving sequence modeling, reinforcement learning, multimodal architecture development and neural network refinement all experience improved performance metrics when scaled across multiple H200 GPUs.

Inference Production Environments

Inference workloads require fast response times, predictable throughput and low-latency pipeline execution. The H200 GPU accelerates inference operations across transformer-based architectures, computer vision pipelines and LLM-based generative systems. Its memory bandwidth and tensor acceleration allow it to handle large token counts, extended context windows and high concurrency across many simultaneous inference requests. Enterprises deploying AI in customer-facing environments gain significant reliability advantages and performance improvements with H200-powered inference clusters.
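Context length and concurrency are tied to memory through the key-value (KV) cache that transformer inference keeps for every active sequence. The sketch below uses the usual size formula (two tensors per layer, one for keys and one for values); the model dimensions and the 60 GB cache budget are hypothetical values chosen for illustration.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, batch: int = 1,
                   bytes_per_elem: int = 2) -> int:
    """KV cache size: keys and values for every layer, head and token."""
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_elem


def max_concurrent_sequences(budget_bytes: float, layers: int, kv_heads: int,
                             head_dim: int, seq_len: int,
                             bytes_per_elem: int = 2) -> int:
    """How many full-length sequences fit in a given cache budget."""
    per_sequence = kv_cache_bytes(layers, kv_heads, head_dim, seq_len,
                                  1, bytes_per_elem)
    return int(budget_bytes // per_sequence)


if __name__ == "__main__":
    # Hypothetical model: 80 layers, 8 KV heads of dimension 128, FP16 cache.
    per_seq = kv_cache_bytes(80, 8, 128, seq_len=8192)
    print(f"KV cache per 8192-token sequence: {per_seq / 2**30:.2f} GiB")
    n = max_concurrent_sequences(60e9, 80, 8, 128, seq_len=8192)
    print(f"Sequences that fit in a 60 GB budget: {n}")
```

Doubling the context window doubles the cache per sequence, so for a fixed memory budget longer contexts and higher concurrency trade off directly against each other.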

Software Ecosystem Development

The Nvidia H200 GPU integrates into the full Nvidia software ecosystem, including CUDA, cuDNN, TensorRT, NCCL, Nsight profiling tools, Magnum IO and Nvidia AI Enterprise. These tools support optimized development workflows, model acceleration, distributed training orchestration, inference optimization and multi-GPU scaling operations. Frameworks such as PyTorch, TensorFlow, JAX and Megatron-LM are heavily optimized for Hopper architecture GPUs, which eases integration and performance scaling across large AI and HPC deployments.

Developer Workflow Enhancements

Developers benefit from streamlined workflows, memory optimization tools, mixed-precision acceleration and integrated profiling capabilities that reveal bottlenecks, memory usage statistics and compute efficiency metrics. The ecosystem support makes it easier to deploy optimized kernels, training jobs, inference graphs and distributed execution routines. These tools reduce time-to-deployment for AI models and ensure developers can efficiently manage large datasets, complex graph structures and high-frequency update cycles.

Data Center Integration

Enterprise data centers rely on efficient management frameworks to handle monitoring, resource allocation, fault tolerance, workload scheduling and GPU cluster coordination. Nvidia provides extensive management tools, including GPU telemetry, cluster orchestration, thermal monitoring, energy usage analytics and predictive maintenance systems. These platforms ensure that even large GPU clusters running thousands of concurrent workloads operate predictably and efficiently.

Cloud Orchestration

The H200 GPU aligns with Kubernetes-based cloud environments, Slurm batch processing systems and distributed orchestration platforms used in both on-premises and cloud AI workflows. The GPU supports containerized deployments through Nvidia container runtime integration, allowing containerized AI workloads to execute across multi-node GPU clusters. This capability simplifies deployment and reduces scaling overhead for developers and enterprise operators managing large distributed compute environments.
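In a Kubernetes cluster, a containerized workload typically asks for GPUs through the `nvidia.com/gpu` resource name. The sketch below builds a minimal Pod manifest as a Python dictionary and prints it as JSON, which `kubectl apply -f` accepts. It assumes the NVIDIA device plugin is already installed in the cluster; the pod name and container image are placeholders.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int = 1) -> dict:
    """Minimal Pod spec that requests `gpus` NVIDIA GPUs and runs nvidia-smi."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": name,
                    "image": image,
                    "command": ["nvidia-smi"],
                    # GPUs are requested under limits using the
                    # device plugin's resource name.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


if __name__ == "__main__":
    # Placeholder image name; substitute a CUDA-enabled image you trust.
    manifest = gpu_pod_manifest("gpu-check", "example.registry/cuda-base:latest")
    print(json.dumps(manifest, indent=2))
```

Saving the output to a file and applying it schedules the pod onto a node with a free GPU; the pod's log should then show the `nvidia-smi` table for the device it was given.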