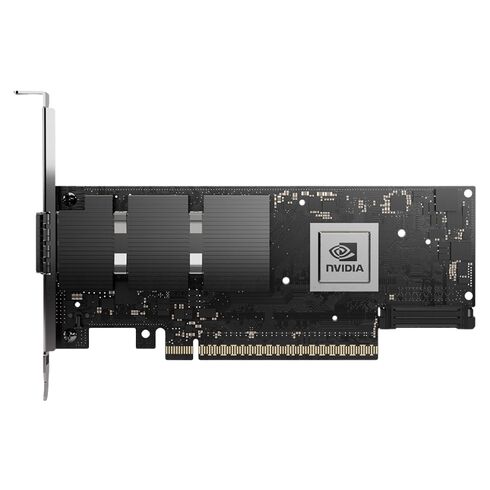

MCX715105AS-WEAT Nvidia ConnectX-7 400GbE NDR QSFP112 1 Port PCI-E Network Adapter

Comprehensive Product Overview

The Nvidia MCX715105AS-WEAT belongs to the ConnectX-7 product line of high-performance InfiniBand and Ethernet adapters. Designed for the bandwidth demands of modern accelerated computing, this adapter pairs 400Gb/s connectivity with a PCIe 5.0 host interface and hardware offloads.

Main Specifications

- Manufacturer: Nvidia

- Part Number: MCX715105AS-WEAT

- Product Type: Single-Port PCIe Network Adapter

Technical Specifications

- Line: ConnectX-7

- PCI Express 5.0 x16 slot

- Backward compatibility with PCIe 4.0 and 3.0

- 1x Ethernet/InfiniBand QSFP112 port

- Total network ports: 1

- Port connector type: QSFP112

Interface Details

PCI Express

- Gen 5.0/4.0 SerDes @ 32/16 GT/s, x16 lanes

- Optional PCIe x16 Gen 4.0 via auxiliary passive card

Networking

- Single QSFP112 port supporting both InfiniBand and Ethernet

Data Transfer Rates

- InfiniBand: NDR, NDR200, HDR, HDR100, EDR, FDR, SDR

- Ethernet: 400/200/100/50/25/10 Gb/s

Protocol Compatibility

- InfiniBand: IBTA v1.5a

- Auto-negotiation across NDR, HDR, EDR, FDR, and SDR rates

- Ethernet standards: 400GAUI-4, 200GAUI-2/4, 100GAUI-1/2, 50GAUI-1/2, 40GBASE, 25GBASE, 10GBASE, 1000BASE-CX, CAUI-4, XLAUI, XLPPI, SFI
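The AUI names in the Ethernet list encode both the total rate and the number of electrical lanes, so the nominal per-lane rate can be read straight from the name. The following is a minimal sketch of that decoding; the helper `lane_rates` is a hypothetical name introduced here for illustration, and the figures are nominal data rates, not measured values.

```python
import re

# Interface names copied from the list above; "200GAUI-2/4" is shorthand
# for two variants (2-lane and 4-lane).
LISTED = ["400GAUI-4", "200GAUI-2/4", "100GAUI-1/2", "50GAUI-1/2"]


def lane_rates(name: str) -> list[tuple[int, float]]:
    """Return (lane_count, nominal Gb/s per lane) for each variant in an AUI name."""
    match = re.fullmatch(r"(\d+)GAUI-(\d+(?:/\d+)*)", name)
    if match is None:
        raise ValueError(f"not an AUI-style name: {name}")
    total_gbps = int(match.group(1))
    return [(int(n), total_gbps / int(n)) for n in match.group(2).split("/")]


for name in LISTED:
    prefix = name.split("-")[0]
    for lanes, per_lane in lane_rates(name):
        print(f"{prefix}-{lanes}: {lanes} x {per_lane:g} Gb/s per lane")
```

Read this way, 400GAUI-4 is four lanes at a nominal 100 Gb/s each, which matches the four-lane QSFP112 port on this adapter.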

Capabilities

- Secure Boot enabled

- Cryptographic functions disabled

Power Requirements

- Voltage: 12V, 3.3Vaux

- Max current (3.3Vaux): 100mA

- Typical consumption: 19.6W with passive cables in PCIe Gen 5.0 x16
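A quick arithmetic check relates the typical power figure to rail current. The sketch below assumes, purely for illustration, that essentially all of the 19.6W typical draw comes from the 12V rail; the listing does not break power down by rail, so treat the result as an estimate.

```python
TYPICAL_POWER_W = 19.6  # from the listing: passive cables, PCIe Gen 5.0 x16
MAIN_RAIL_V = 12.0      # from the listing


def rail_current_a(power_w: float, voltage_v: float) -> float:
    """Current in amperes from I = P / V."""
    return power_w / voltage_v


# Assumption: the whole typical draw is attributed to the 12V rail.
print(f"Estimated 12V current: {rail_current_a(TYPICAL_POWER_W, MAIN_RAIL_V):.2f} A")
```

Under that assumption the 12V rail carries roughly 1.63 A, far above 100mA, which suggests the 100mA limit applies to the 3.3Vaux rail and not to the main supply.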

Environmental Conditions

- Operating temperature: 0°C to 55°C

- Storage temperature: -40°C to 70°C

- Humidity (operational): 10% to 85%

- Humidity (non-operational): 10% to 90%

Nvidia MCX715105AS-WEAT 1 Port PCI-E Network Adapter

The Nvidia MCX715105AS-WEAT ConnectX-7 1 Port 400GbE NDR QSFP112 InfiniBand PCI-Express 5.0 x16 Network Adapter is a high-performance network interface built for low latency, high throughput, and scalability. It targets modern data centers, hyperscale infrastructure, artificial intelligence clusters, high-performance computing environments, and mission-critical enterprise deployments that need very high bandwidth with consistent performance. The adapter combines networking, storage acceleration, and hardware offload engines on a single card.

Key Specifications

PCI Express 5.0 x16 Host Connectivity

The PCI Express 5.0 x16 host interface on the MCX715105AS-WEAT provides enough host bandwidth for a server to drive the 400GbE link without a bus bottleneck. PCIe Gen5 doubles the per-lane rate of PCIe Gen4 (32 GT/s versus 16 GT/s), which speeds up DMA transfers and improves multi-queue scaling. The extra headroom helps keep packet delivery rates steady under peak load in virtualized or containerized environments where multiple workloads compete for bandwidth.
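The host-bandwidth claim can be checked with a short calculation. This sketch uses the per-lane transfer rates and 128b/130b line coding defined for PCIe Gen3 through Gen5; the function name is hypothetical, and the result is raw link bandwidth in one direction, before packet and flow-control overhead reduce the usable figure.

```python
# Per-lane transfer rates in GT/s for each PCIe generation.
PCIE_GT_PER_LANE = {"3.0": 8.0, "4.0": 16.0, "5.0": 32.0}
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line coding (Gen3 through Gen5)
PORT_GBPS = 400                  # network port speed from the listing


def pcie_raw_gbps(gen: str, lanes: int = 16) -> float:
    """Raw one-direction link bandwidth in Gb/s, before protocol overhead."""
    return PCIE_GT_PER_LANE[gen] * lanes * ENCODING_EFFICIENCY


for gen in ("3.0", "4.0", "5.0"):
    bw = pcie_raw_gbps(gen)
    verdict = "exceeds" if bw >= PORT_GBPS else "falls short of"
    print(f"PCIe {gen} x16: {bw:6.1f} Gb/s raw, {verdict} {PORT_GBPS} Gb/s")
```

A Gen5 x16 link comes out at about 504 Gb/s raw, while a Gen4 x16 link reaches only about 252 Gb/s. That is why the card needs a Gen5 slot to run the port at full rate, and why it is bandwidth-limited when it falls back to PCIe 4.0 or 3.0.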

Single-Port 400GbE NDR Link Technology

The single QSFP112 port supports 400 Gigabit Ethernet and NDR InfiniBand signaling, so the same card can be deployed in either an Ethernet or an InfiniBand fabric. It works with optical modules or DAC cables, covering both short in-rack runs and longer links. The port is built around high-speed serializer/deserializer lanes with forward error correction, which helps keep links reliable in electrically noisy or thermally demanding racks.

QSFP112 Physical Layer Advantages

The QSFP112 port format on the MCX715105AS-WEAT runs higher lane speeds than earlier QSFP generations, delivering more throughput within the same physical footprint. This supports higher rack density and reduces cabling complexity. Modules are hot-pluggable, which simplifies maintenance and network expansion.

Performance

Ultra-Low Latency

ConnectX-7 adapters integrate hardware-accelerated packet processing pipelines that minimize latency between host memory and the network fabric. Offload engines handle transport-layer segmentation, checksum generation, and virtualization mapping directly on the adapter. With less CPU involvement, systems see more predictable latency and better application responsiveness, particularly in distributed computing frameworks and latency-sensitive financial or scientific workloads.

Massive Throughput Capabilities

With support for 400Gb/s line-rate transmission, the adapter can sustain very high packet rates across many simultaneous flows. Its internal switching fabric, high-speed buffer memory, and multi-queue architecture help it hold throughput under heavy workloads such as AI training clusters, parallel file system access, or real-time data analytics pipelines. Hardware scheduling logic balances traffic across queues to limit congestion and maintain fairness.

High Optimization

The internal design supports millions of packets per second while preserving data integrity and timing accuracy. Specialized packet classification engines allow rapid inspection of headers for routing, filtering, and virtualization mapping, ensuring that each frame is delivered to the appropriate destination with minimal delay.
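To put "millions of packets per second" in context, the theoretical frame-rate ceiling of a 400 Gb/s Ethernet link can be worked out from the frame size. This is a sketch of the standard Ethernet line-rate arithmetic, not a measured figure for this adapter; the function name and the chosen frame sizes are illustrative.

```python
# Per-frame overhead on the wire: 7 B preamble + 1 B start-of-frame
# delimiter + 12 B minimum inter-frame gap.
ETH_OVERHEAD_BYTES = 20


def max_frames_per_second(link_gbps: float, frame_bytes: int) -> float:
    """Theoretical maximum Ethernet frame rate for a link speed and frame size."""
    bits_on_wire = (frame_bytes + ETH_OVERHEAD_BYTES) * 8
    return link_gbps * 1e9 / bits_on_wire


for size in (64, 512, 1518):
    mpps = max_frames_per_second(400, size) / 1e6
    print(f"{size:5d}-byte frames: {mpps:7.2f} Mpps")
```

At the minimum 64-byte frame size the link ceiling is about 595 million frames per second, dropping to about 32.5 million with 1518-byte frames. Whether the adapter reaches those ceilings depends on the workload and host configuration.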

InfiniBand and Ethernet Convergence

Dual Protocol Fabric Support

The port can be configured for either InfiniBand or Ethernet operation, giving flexibility to organizations that run mixed network architectures. InfiniBand mode delivers deterministic latency and high message rates suited to HPC clusters, while Ethernet mode provides compatibility with existing data center infrastructure. Being able to switch protocols protects the investment if the fabric changes later.

RDMA Acceleration

Remote Direct Memory Access support allows direct memory-to-memory data transfers between systems without CPU intervention. This dramatically reduces latency and CPU overhead while increasing effective throughput. RDMA is particularly valuable for distributed storage platforms, clustered databases, and machine learning pipelines where rapid data movement is essential.

GPU and Accelerator Integration

The adapter’s architecture is optimized for high-speed communication between compute accelerators and network fabrics. Direct memory pathways and peer-to-peer communication capabilities enable GPUs or specialized processing units to exchange data directly across nodes without intermediate copying. This improves training times, simulation speed, and real-time processing efficiency.

Hardware Offload and Acceleration Engines

Transport Offload Features

Advanced transport offloads in this category reduce CPU overhead by handling segmentation, reassembly, checksum calculation, and packet classification directly on the network adapter. These capabilities free host processors for application workloads rather than networking tasks, resulting in improved system efficiency and lower operational costs.

Security Acceleration

ConnectX-7 family adapters can include inline encryption and decryption engines, but the MCX715105AS-WEAT is listed above with cryptographic functions disabled and Secure Boot enabled. On this model, hardware security therefore centers on Secure Boot, which authenticates the adapter firmware before it runs. Deployments that need line-rate hardware encryption for multi-tenant or protected traffic should select a crypto-enabled variant.

Storage Protocol Acceleration

Support for hardware-accelerated storage networking protocols allows the adapter to handle storage traffic efficiently without burdening the host CPU. This capability enhances throughput and reliability for network-attached storage and distributed file systems.

Cloud Infrastructure Optimization

SR-IOV and Multi-Function

Single Root I/O Virtualization support allows multiple virtual machines or containers to share a single physical adapter while maintaining isolated performance paths. Each virtual function can be assigned dedicated queues, which helps keep performance predictable for each workload as system load rises.
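On Linux, SR-IOV virtual functions are typically managed through the kernel's standard `sriov_totalvfs` and `sriov_numvfs` sysfs attributes. The sketch below assumes a Linux host with SR-IOV already enabled in the adapter firmware and system BIOS. The helper names and the `sysfs_root` parameter are hypothetical, and the listing does not state how many virtual functions this adapter exposes.

```python
from pathlib import Path


def _device_dir(iface: str, sysfs_root: str = "/sys") -> Path:
    """Sysfs directory of the PCI device behind a network interface."""
    return Path(sysfs_root) / "class" / "net" / iface / "device"


def sriov_status(iface: str, sysfs_root: str = "/sys") -> dict[str, int]:
    """Read the supported and currently active VF counts."""
    dev = _device_dir(iface, sysfs_root)
    return {
        "total_vfs": int((dev / "sriov_totalvfs").read_text()),
        "active_vfs": int((dev / "sriov_numvfs").read_text()),
    }


def set_vf_count(iface: str, count: int, sysfs_root: str = "/sys") -> None:
    """Set the number of active VFs (needs root on a real system)."""
    status = sriov_status(iface, sysfs_root)
    if not 0 <= count <= status["total_vfs"]:
        raise ValueError(f"count must be between 0 and {status['total_vfs']}")
    numvfs = _device_dir(iface, sysfs_root) / "sriov_numvfs"
    # The kernel rejects changing one nonzero VF count directly to another,
    # so reset to 0 first when VFs are already active.
    if status["active_vfs"] not in (0, count):
        numvfs.write_text("0")
    numvfs.write_text(str(count))
```

As a usage example, `set_vf_count("ens1f0np0", 4)` run as root would request four virtual functions, where `ens1f0np0` is a placeholder for whatever interface name the host assigns to the adapter.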

Container Networking Efficiency

The adapter architecture is designed to integrate seamlessly with container orchestration platforms, enabling high-performance networking for microservices architectures. Hardware-based packet steering and isolation features ensure that containerized applications maintain consistent network performance even in highly dynamic environments.

Network Namespace Acceleration

Hardware mapping of network namespaces reduces overhead associated with software-based switching. This allows high-density container deployments to scale efficiently without sacrificing throughput or latency.

Scalability for Modern Data Centers

High-Density Deployment Capability

The single-port 400GbE design allows data centers to achieve extremely high aggregate bandwidth within limited rack space. Fewer adapters are required to reach desired throughput targets, reducing power consumption, cabling complexity, and cooling requirements.

Cluster Expansion Readiness

ConnectX-7 adapters support scalable cluster architectures in which nodes can be added without redesigning the network. Hardware support for advanced routing, congestion control, and adaptive traffic management helps keep performance stable as the cluster grows.

Topology Awareness

Integrated intelligence allows the adapter to adapt to different network topologies, optimizing packet routing and congestion handling. This capability improves reliability and performance consistency in large distributed environments.

Reliability and Data Integrity

Error Detection and Correction

Advanced error correction mechanisms ensure reliable data transmission even at extremely high speeds. These features monitor signal quality and automatically correct transmission errors, maintaining data integrity across long cable runs or high-density environments.

Power Efficiency

Efficient silicon design and intelligent power management enable the adapter to maintain high performance while minimizing heat output. Thermal sensors continuously monitor operating conditions and dynamically adjust performance parameters to maintain stability and prevent overheating.

Failover and Redundancy

The adapter supports failover mechanisms that help maintain network connectivity after a link disruption. Because this model has a single port, path redundancy depends on pairing it with a second adapter or a redundant fabric path; rapid recovery features then help keep mission-critical applications running.

Standard PCIe Slot Compatibility

This model is a PCIe add-in card designed for servers with a PCIe x16 slot. The design provides mechanical stability, efficient airflow, and secure electrical connectivity within high-density server chassis.

Connector Durability

The QSFP112 connector is engineered for repeated insertion cycles without signal degradation. Reinforced contact surfaces and precise alignment ensure long-term reliability even in frequently serviced systems.

Use Case Optimization

AI Training Clusters

High-bandwidth interconnect capability makes this adapter well suited to distributed AI training clusters where massive datasets must move between nodes quickly. The combination of RDMA, direct GPU communication, and low latency supports efficient model training and inference.

High-Performance Computing Clusters

Scientific simulations, weather modeling, genomic analysis, and other compute-intensive tasks benefit from the adapter’s deterministic latency and high message rate support. These features allow tightly coupled cluster nodes to communicate as though they were part of a single unified system.

Large-Scale Storage Networks

High throughput and hardware acceleration make the adapter suitable for distributed storage architectures requiring rapid data replication and retrieval. The ability to handle large volumes of storage traffic without CPU overhead improves overall system responsiveness.